Research and development in AI is an interesting concept. First off, there hasn't been much noticeable progress in AI since the 80s. Considering that AI is an O(n^2) problem and computing power has grown exponentially since then, one might expect progress to have been outrageous. Growing up from an easily impressed kid into the harsh world where practicality and simplicity rule has taught me a bit of scepticism. When reading an article, I look for signs of flakiness, lies, and so on. When someone gives a plan that sounds too good to be true, I take a look at the numbers and decide not to risk my reputation by supporting it vocally. But there's still something deep in my mind that doesn't want to give up on the idea that a solution to the big problems exists. It's happened before, it'll happen again. Maybe that's why I want to write video games: when I control the physics and the plot, I can just say: it is so, so let's do it.

The reality of the world is that big breakthroughs come after massive investments of time and energy (including waste) in things that do and do not work. It simply will not happen if we aren't working on it. So I think it's a good thing that I have a nearly inexhaustible supply of curiosity about the cool and interesting. But simply researching things that are cool and interesting is not nearly enough. Progress requires R&D into topics where other researchers are not getting results but where you might have some insight.

So I've been working on some simple AI. Really all I've written is just the infrastructure necessary for the code I really want to write. But once done, the system should be pretty easy to work with. I was able to test a cops and robbers simulation at approximately realtime. That's useful. When I write an intelligent robber or cop, I'll know because they'll be more "clever" than their opponents. I wrote a short essay on how to reimplement that so that it would be more stable, so I'm slowly going to do that.

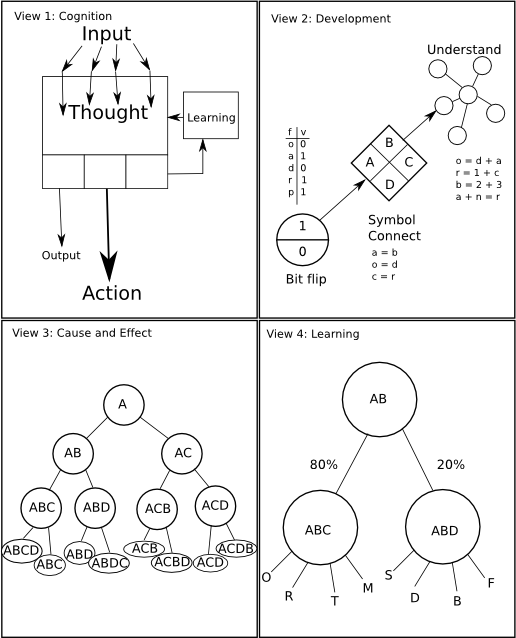

Until then, I'm working on other stuff. I had an interesting idea on symbolic logic the other day, so I have been following it by writing here and there. I drew a nice picture and digitized it for your reading pleasure below. It's funny for me because it looks like something I would have done for psych 101 had I taken it now instead of 5 years ago. But instead of being a visual aid for psych class, it's a purposely incomplete diagram for an AI that will run through 32M thoughts to its eventual conclusion as an adolescent cat. Okay, I just like kitties.

A brief explanation is in order. The first view is of cognition via the blackbox of thought. Input affects thought. Thought affects output and actions. Thought is also the basis for learning, which in turn affects thought. The second view is of development. Normal living things go through stages that are easy to understand: a first where bits are flipped randomly and input is taken, a second where symbolic logic can be used to connect flipped bits to input and thus output, and a third where cause and effect are fully understood. I suspect that the third stage simply will not happen in a personal computer at anywhere near realtime speeds. Maybe I'm wrong. I have hope just because it'd be pretty cool. The third view is of a branched system that allows us to decide very quickly and easily whether A should require B or C, as well as whether AB or AC should require B, C, or D. But how do we decide which requires which? View 4 explains how thought creates learning. As input is processed, it is connected to the nodes that support it. Connections that are supported by input are given weight so that the branched system in view 3 will choose them.
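To make views 3 and 4 a little more concrete, here is a minimal Python sketch of one way a weighted branch system could look. It is not the code behind the diagram: the names `Node`, `think`, and `learn`, the starting weight of 1.0, and the fixed reward are all assumptions made up for illustration.

```python
import random


class Node:
    """A branch point in the tree of view 3 (hypothetical structure)."""

    def __init__(self, label):
        self.label = label
        self.children = []  # child nodes this node can lead to
        self.weights = []   # one weight per child, same order as children

    def add_child(self, child, weight=1.0):
        self.children.append(child)
        self.weights.append(weight)
        return child


def think(root, rng):
    """Walk from the root to a leaf, picking each branch at random but biased by weight."""
    path = [root]
    node = root
    while node.children:
        node = rng.choices(node.children, weights=node.weights, k=1)[0]
        path.append(node)
    return path


def learn(path, reward=1.0):
    """View 4: add weight to every connection along a path that input supported."""
    for parent, child in zip(path, path[1:]):
        i = parent.children.index(child)
        parent.weights[i] += reward


# Tiny example: A can require B or C; input keeps supporting A -> B.
a = Node("A")
b = a.add_child(Node("B"))
c = a.add_child(Node("C"))
for _ in range(5):
    learn([a, b])

rng = random.Random(0)  # seeded so the run is repeatable
picks = [think(a, rng)[-1].label for _ in range(1000)]
print("B chosen", picks.count("B"), "times, C chosen", picks.count("C"), "times")
```

After a few rounds of support, the walk picks B far more often than C, but C is never ruled out entirely, which keeps the choice random but biased.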

The problem of convincing a person who doesn't think that robots can have intelligence can probably be solved in a properly written video game where the AI uses an advanced learning technique that has not been obviously tampered with by the programmer. Certain researchers have been successful at this already, and I hope to add my own method to this list of proper AI.

I was only able to come up with one equation tonight, so you'll have to excuse me. It's a very simple one, but it might be useful. Assume that one thought is characterized by a walk of the tree in view 3 with a set of random (but biased) choices. Assume that one input is defined by inputting the data into the tree. A mammal is limited in computation by the number of conscious thoughts per second. For example, a mathematician cannot compute 2+4 and 3*6 faster than they can consciously do the math for each. So that means we can limit mammals to 1 thought per second. The fastest developing mammals are certainly not humans. My pick is cats. In a year, an adolescent cat has become as smart as an adult cat. So let's do the math:

Thoughts per second = 1. Seconds per year: 365*24*60*60 = 31.5M. Computations required for an adolescent cat = 31.5M thoughts.

So how many flops does each thought take? I'm thinking quite a bit less than 1000. So, being a good computer scientist, your question is: do you think that reducing a classic O(n^2) to an O(n) will work? My answer is yes. My previous work in AI has found that correlations can be tracked over time by simple statistics as long as you reduce the number of equations.
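The arithmetic is easy to check in a few lines of Python. This is only a back-of-the-envelope sketch: the 1000 flops per thought is the upper bound guessed above, and the 1 GFLOPS machine speed is an assumption picked purely for illustration.

```python
# Figures from the post
THOUGHTS_PER_SECOND = 1
SECONDS_PER_YEAR = 365 * 24 * 60 * 60

# Upper bound guessed in the post; the real cost per thought is unknown
FLOPS_PER_THOUGHT = 1000

# Assumed machine speed, for illustration only
MACHINE_FLOPS_PER_SECOND = 1e9

thoughts_per_year = THOUGHTS_PER_SECOND * SECONDS_PER_YEAR
total_flops = thoughts_per_year * FLOPS_PER_THOUGHT
simulation_seconds = total_flops / MACHINE_FLOPS_PER_SECOND

print(f"thoughts in one cat-year: {thoughts_per_year:,}")
print(f"flops at {FLOPS_PER_THOUGHT} per thought: {total_flops:,}")
print(f"seconds on the assumed machine: {simulation_seconds:.1f}")
```

If the cost per thought really does stay roughly constant, the total grows linearly with the number of thoughts, which is the O(n) case argued for above.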

--Javantea
