by Javantea Sept 23, 2008

What could be more boring than a 3D box rendered in Blender with a stupid material and shader? Post-processing said box in Inkscape, possibly. But this fine morning (night, whichever it is) I found that both tools are doing amazing things in just the place I need them. Blender's shading and import have improved quite a bit in the latest release, which means I can import all my assets from Hack Mars into Blender. The animation works perfectly. My assets from AltSci Cell are already in Blender, and now that I know how they import and export animation, I can do more work in that area. One of the main reasons I gave up on Hack Mars and AltSci Cell (both projects are on indefinite hold awaiting interest and investment) was that I couldn't import, export, or create content in Blender. That's a pretty sad state of affairs compared to MS3D (which I haven't used since the end of Hack Mars dev).

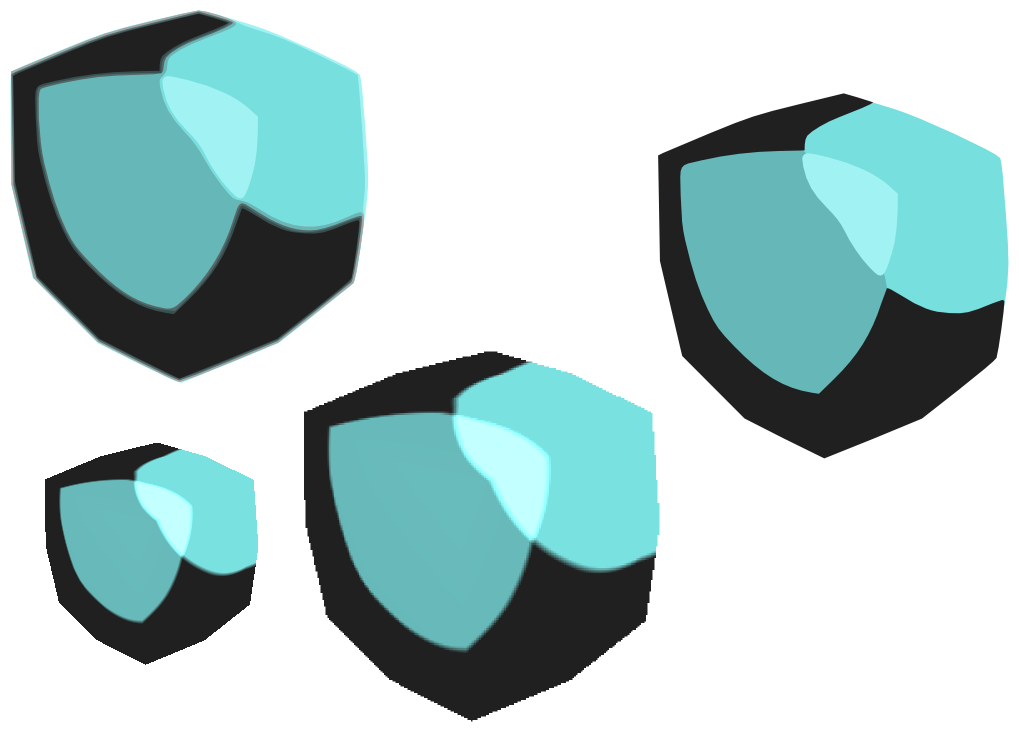

Here is how the picture above was put together:

The small box in the lower left is the original output of Blender.

The box in the lower center is that same image scaled up.

The box in the upper left is the tracing output optimized for 8 colors.

The box in the upper right is my attempt at reducing the one on the left.

As you can see, the outputs in the upper left and upper right are rather interesting. Optimizing the tracing output for 4 colors instead of 8 makes it look smoother and better.
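To get a feel for why fewer colors can look smoother, here is a minimal sketch in Python. It is not Inkscape's actual quantizer; `posterize` and `count_bands` are hypothetical helpers made up for illustration. They snap a grayscale ramp to evenly spaced levels and count the flat bands that result, each of which a tracer would have to turn into its own shape.

```python
def posterize(value, levels):
    """Snap an 8-bit value (0-255) to one of `levels` evenly spaced values."""
    step = 255 / (levels - 1)
    return round(round(value / step) * step)


def count_bands(row):
    """Count runs of identical adjacent values in a row of pixels."""
    if not row:
        return 0
    return 1 + sum(1 for a, b in zip(row, row[1:]) if a != b)


# A smooth 256-pixel grayscale ramp, standing in for one shaded face of the box.
ramp = list(range(256))

bands_8 = count_bands([posterize(v, 8) for v in ramp])
bands_4 = count_bands([posterize(v, 4) for v in ramp])
print(bands_8, bands_4)  # prints "8 4"
```

Half as many levels means half as many bands across the same gradient, so each traced region is larger and there are fewer edges for the eye to catch.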

So why would I post-process this rendering in Inkscape? Inkscape is a 2D vector graphics program, so in previous versions it wouldn't do much with raster graphics other than display them. Tracing 2D rasters into vector graphics by hand is boring and tedious, which makes it a perfect job for an algorithm. What do you know, Inkscape has a Trace Bitmap function that created 2 of the 3 vector graphics shown here. I increased the resolution from 72 dpi to 150 dpi to show what vector graphics are good for: scaling without pixelation. Why would I want to scale a raster of a 3D box? Rotoscoping is a technique that traces over images (usually video frames) to make them simpler and/or more artistically useful. But making a cel-shaded box simpler? One interesting use is making the box smoother and less true to the 3D renderer.

So was the experiment useful? Absolutely. If I wanted to make SVGs out of a reasonable number of renderings of more interesting objects, I could use this technique either manually or with automation. In fact, it may be possible to get the Blender renderer to output a PNG directly to the tracer process, making this technique far easier to use. I'm thinking about using this process for a 2D RPG that uses prerendered 3D assets. Why would I do such a thing when I have a good 3D engine? First I want to see if I can make a high-quality prerendered 3D game on mobile phones, then we'll see where it goes. SVG is a generally interesting technology, so if the most I get out of it is a new process, it's worth it to me.
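An automated version might look something like the sketch below. It is only a rough outline under a few assumptions: `blender` and `potrace` are both on the PATH, and the file names and the `build_commands` / `render_and_trace` helpers are hypothetical. It also swaps PNG for BMP, because potrace (the tracer that Inkscape's Trace Bitmap is built on) reads BMP and PNM files rather than PNG. Note that potrace on its own produces a black-and-white trace, so a multi-color result like the 8-color one would need one pass per color layer.

```python
import subprocess
from pathlib import Path


def build_commands(blend_file, out_dir, frame=1):
    """Return (render_cmd, trace_cmd, svg_path) for a single frame.

    Assumes `blender` and `potrace` are installed and on PATH.
    """
    out_dir = Path(out_dir).resolve()
    # Blender replaces #### in the output path with the zero-padded frame number.
    bitmap = out_dir / f"frame_{frame:04d}.bmp"
    svg = bitmap.with_suffix(".svg")
    render_cmd = [
        "blender", "-b", str(blend_file),   # -b: run in the background, no UI
        "-o", str(out_dir / "frame_####"),  # output path pattern
        "-F", "BMP",                        # output file format
        "-f", str(frame),                   # render this frame; must follow -o and -F
    ]
    trace_cmd = ["potrace", str(bitmap), "-s", "-o", str(svg)]  # -s: SVG backend
    return render_cmd, trace_cmd, svg


def render_and_trace(blend_file, out_dir, frame=1):
    """Run both steps; raises CalledProcessError if either tool fails."""
    render_cmd, trace_cmd, svg = build_commands(blend_file, out_dir, frame)
    subprocess.run(render_cmd, check=True)
    subprocess.run(trace_cmd, check=True)
    return svg
```

Calling `render_and_trace("box.blend", "out")` would render frame 1 and leave `frame_0001.svg` in the `out` directory. Keeping the command construction separate from the execution makes it easy to inspect or log the exact commands before running a whole batch of renderings.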

If you'd like the SVG files, the .blend files, or just need some help in Blender or Inkscape, drop me a line and I'll see what I can do.
